Your users are in the crosshairs of the most dangerous attackers out there. Follow these steps to better protect them. […]

Not a day seems to go by without another ransomware attack making headlines. And where do many of these attacks begin? In your users' email inboxes.

By now, you know that your users are both your first line of defense and your weakest link. Not only do you need to add another layer of spam filtering to all the email that comes into your office, but you also need to train your users to recognize when they are being targeted by a phishing attack. In addition, you should harden the operating system to make it more resistant to attacks. Microsoft recently published some recommendations along these lines.

Here are some important ways you can protect your users from recent spear phishing campaigns:

Make sure that all email goes through some kind of filtering system. Whether you run a local mail server or use a cloud-based email service, you need a filtering system that looks for attack patterns. Even if you still have local mail servers, a filtering service that shares threat information across many servers can spot patterns that a single server cannot. Often, these email hygiene platforms also store and forward email in case something happens to your local mail server. Installing such a solution is a necessity for anyone who runs email servers.

If budget is an issue, consider exploring open source, community, or free solutions to better protect your business. Solutions such as Security Onion can be added to your network as a Linux distribution for threat hunting, enterprise security monitoring, and log management. Recently, version 2.3.50 for the Security Onion platform was released along with training videos. Snort is another open source platform that can be added to your network to provide additional protection and monitoring capabilities.

Prioritize patching based on the potential impact on your network. In many companies, patches are not installed immediately, but are deployed only after a full audit. This can mean weeks go by without any updates being provided. Patches should be installed primarily for those users who have either been affected in the past or could be considered a potential target.

Check all the software installed on the system to make sure it is all supported and maintained by the manufacturers. Pay special attention to patches for the operating system, as well as any browser-based tools that may be used, such as software for viewing PDF files.

Make sure your patching team is aware of any vulnerabilities that are actively being attacked to ensure your business is protected. Check if the workstations have been patched in the past and if there have been any side effects with the updates. If the workstations have not had problems with patches in the past, you can speed up deployment.
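As a rough illustration of the prioritization described above, here is a minimal Python sketch that orders workstations for patching. The `Workstation` fields, the sample machine names, and the sort order are my own assumptions for illustration; they are not taken from any patch management product.

```python
from dataclasses import dataclass


@dataclass
class Workstation:
    name: str
    previously_attacked: bool   # user or machine was hit in the past
    likely_target: bool         # considered a potential target (assumption: you flag these yourself)
    past_patch_problems: bool   # earlier updates caused side effects


def patch_order(machines):
    """Sort so at-risk machines come first, and trouble-free ones come first within each group."""
    def key(m):
        at_risk = m.previously_attacked or m.likely_target
        # False sorts before True, so negate the flag that should come first.
        return (not at_risk, m.past_patch_problems, m.name)
    return sorted(machines, key=key)


# Hypothetical fleet used only to show the ordering.
fleet = [
    Workstation("lab-07", False, False, False),
    Workstation("cfo-laptop", False, True, False),
    Workstation("reception-01", True, False, True),
]

for m in patch_order(fleet):
    print(m.name)
```

Machines with a clean patch history sort ahead of those with past side effects inside the same risk group, which is one way to decide where deployment can be sped up.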

Investigate patching tools such as Configuration Manager, BatchPatch, or any of the many other patch management tools. For the rest of 2021, make it your goal to distribute updates faster to the affected workstations. If you usually wait a month before delivering updates, try to deliver them in three weeks. Then accelerate your testing processes to achieve deployment in two weeks. The aim is to shorten the window between the release of an update and its delivery.
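To make the shrinking window concrete, the following Python sketch computes a deployment deadline for each stage. Treating "a month" as 30 days, the example release date, and the function name `deployment_deadline` are assumptions made for illustration.

```python
from datetime import date, timedelta

# Target days between patch release and deployment at each stage.
# 30 -> 21 -> 14 approximates the month / three weeks / two weeks steps above.
TARGET_WINDOWS_DAYS = [30, 21, 14]


def deployment_deadline(release: date, stage: int) -> date:
    """Return the deadline for a patch released on `release` at the given stage (0-based)."""
    # Stages beyond the last one keep using the shortest window.
    index = min(stage, len(TARGET_WINDOWS_DAYS) - 1)
    return release + timedelta(days=TARGET_WINDOWS_DAYS[index])


release = date(2021, 6, 8)  # example release date, chosen arbitrarily
for stage in range(3):
    print(stage, deployment_deadline(release, stage).isoformat())
```

Tracking actual deployment dates against these deadlines is a simple way to see whether the window is really getting shorter.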

Restrict the use of browsers and file sharing platforms. This limitation can be tedious, but it can be necessary to best protect users from targeted attacks. Discuss these restrictions with your employees to make sure they understand the risks of file sharing platforms. In my own company, we recently adjusted our policies to be more restrictive.

Too many file sharing platforms can be spoofed, making it easier for attackers to trick your users into opening a malicious link. Standardize on a specific platform and communicate this to your customers, partners and users. Make sure the portals are branded and the processes are explained and documented, and make sure your employees know they can't bypass the process.

Enable attack surface reduction (ASR) rules for your Windows 10 deployments. Microsoft 365 E5 and Enterprise licenses allow you to monitor and enforce ASR rules, but even on a network that runs only Windows 10 Professional, you can enable the rules to better protect your network. In particular, enable the following attack surface reduction rule to block or audit activity related to this threat.

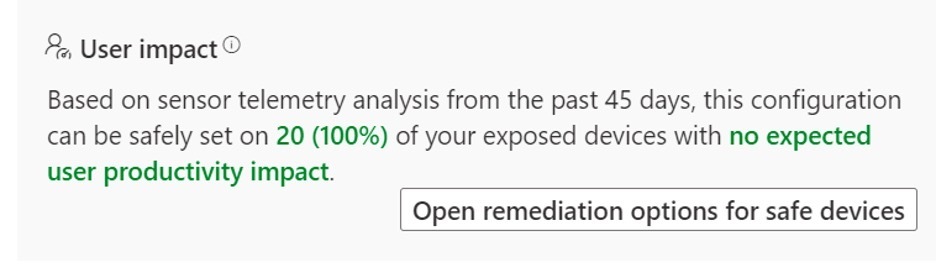

Enable the "Block JavaScript or VBScript from launching downloaded executable content" rule. As Microsoft notes in its console, this is not a common use case for businesses, but enterprise applications sometimes use scripts to download and launch installers. This security control only applies to machines running Windows 10, version 1709 or later, and Windows Server 2019. If you have Microsoft 365 ATP access, you can check the console to see what impact, if any, is expected on your network.

(c) Susan Bradley

This user impact report can tell you right away whether you can enable the recommended protection with little or no impact on your environment. If you do not have access to this report, set the ASR rules to the Warn setting to determine whether you are affected.

Note that warn mode is not supported for the following three attack surface reduction rules when you configure them in Microsoft Endpoint Manager (if you configure the attack surface reduction rules using Group Policy, warn mode is supported):

- Block JavaScript or VBScript from launching downloaded executable content (GUID d3e037e1-3eb8-44c8-a917-57927947596d)

- Block persistence through WMI event subscription (GUID e6db77e5-3df2-4cf1-b95a-636979351e5b)

- Use advanced protection against ransomware (GUID c1db55ab-c21a-4637-bb3f-a12568109d35)
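If you script your rollout planning, the exception above can be captured in a small lookup. This Python sketch encodes only what is stated here for these three rules. The tool labels `"endpoint_manager"` and `"group_policy"` are hypothetical names of my own, and the sketch assumes warn mode is available for any rule not on the list, which you should confirm for your environment.

```python
# Rules for which warn mode is not supported in Microsoft Endpoint Manager,
# as listed above.
NO_WARN_IN_ENDPOINT_MANAGER = {
    "d3e037e1-3eb8-44c8-a917-57927947596d",  # JavaScript/VBScript launching downloads
    "e6db77e5-3df2-4cf1-b95a-636979351e5b",  # persistence through WMI event subscription
    "c1db55ab-c21a-4637-bb3f-a12568109d35",  # advanced protection against ransomware
}


def warn_mode_available(rule_guid: str, tool: str) -> bool:
    """Return True if warn mode can be used for this rule with the given tool.

    `tool` is a hypothetical label: "endpoint_manager" or "group_policy".
    """
    if tool == "group_policy":
        return True
    if tool == "endpoint_manager":
        # Assumption: rules not in the list above support warn mode.
        return rule_guid.lower() not in NO_WARN_IN_ENDPOINT_MANAGER
    raise ValueError(f"unknown tool: {tool}")


print(warn_mode_available("d3e037e1-3eb8-44c8-a917-57927947596d", "endpoint_manager"))
print(warn_mode_available("d3e037e1-3eb8-44c8-a917-57927947596d", "group_policy"))
```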

To enable the rule using Group Policy, open the Group Policy Management Console, right-click the Group Policy object you want to configure, and select Edit. In the Group Policy Management Editor, go to Computer Configuration and select Administrative Templates. Expand the tree to "Windows components", then go to "Microsoft Defender Antivirus", then "Microsoft Defender Exploit Guard", and then "Attack Surface Reduction".

Select "Configure Attack surface reduction rules" and select "Enabled". Then, in the "Options" section, you can set the individual state for each rule. Enter "d3e037e1-3eb8-44c8-a917-57927947596d" as the value name. For the value, enter "6" (warn) at first, and change it to "1" (block) later, once you have confirmed the rule does not disrupt your users.
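For reference, the rule states can be written down as a small Python sketch that produces the value name and value pairs to type into the Options list. The states 6 (warn) and 1 (block) are the ones used in the step above. The values 0 (disabled) and 2 (audit) are included from Microsoft's documentation as I understand it, and the helper name `gpo_entry` is my own.

```python
from enum import IntEnum


class AsrState(IntEnum):
    DISABLED = 0  # rule is off
    BLOCK = 1     # rule is enforced
    AUDIT = 2     # rule only logs what it would have blocked
    WARN = 6      # user is warned and can choose to bypass


JS_VBS_RULE = "d3e037e1-3eb8-44c8-a917-57927947596d"


def gpo_entry(rule_guid: str, state: AsrState) -> tuple:
    """Return the (value name, value) pair for the Group Policy Options list."""
    return (rule_guid, str(int(state)))


# Staged rollout: start in warn mode, move to block later.
rollout = [gpo_entry(JS_VBS_RULE, AsrState.WARN), gpo_entry(JS_VBS_RULE, AsrState.BLOCK)]
for name, value in rollout:
    print(f"{name} = {value}")
```

Keeping the states in one place like this makes it harder to mistype a value when you move several rules from warn to block.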

Your users are in the crosshairs of the best attackers out there. Even without an E5 license, make sure you use these ASR rules to protect your workstations.

* Susan Bradley writes for CSO.com.