Commentary on low-code trends: Why 2021 will be the year of low-code

The coronavirus crisis gave low-code technology a major boost in 2020. The technology is carrying this momentum into the new year and will continue to gain ground on a broad front, opening up some new career paths along the way.

Will software development based on the modular principle finally take off in the New Year?

The Covid-19 pandemic has made many companies drastically aware of their backlog in digitization. To keep their business running and meet the needs of their employees and customers, they were forced to develop new applications quickly to close existing process gaps.

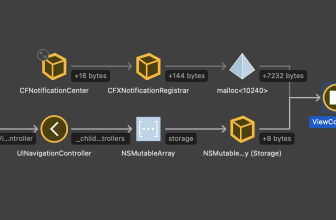

As a result, low-code technology became more and more mainstream, as it was able to play to its strengths under these special circumstances. It makes it possible to create software quickly with the help of reusable templates, configurable components and proven best practices, while complying with a company's own corporate governance requirements.

This also allows so-called citizen developers to write software: employees from the specialist departments who are not actually developers at all. The positive experience many companies have had with low-code in the past year will further fuel the rapid growth of this technology in 2021. And this in turn will lead to a comprehensive implementation of DevOps.

Citizen developers are a real secret weapon when it comes to bringing IT and business together. They can talk to colleagues from the IT department on an equal footing, speak the same language as them, and thus work closely with them.

In 2021, citizen developers will therefore be instrumental in breaking up traditional silos, quickly scaling solutions and bringing shadow IT out of the shadows. In turn, this gives IT the opportunity to develop from a mere cost center into a strategic driver of digital transformation.

How will the low-code market develop?

Companies will be more demanding in their investments in low-code technologies in 2021. As the technology spreads, companies' awareness and understanding of it are also growing. This brings clearer requirements and more concrete expectations.

In the low-code market, the mature solutions will therefore clearly stand out from the rest in 2021. In addition, companies will focus more on entire ecosystems by complementing their low-code solutions with further tools, frameworks and best practices. For example, by implementing design thinking and introducing tools such as the Mural virtual whiteboard, they can strengthen their initial low-code investments and create the foundation for further growth.

Last but not least, low-code will open up completely new professional paths in 2021. The Covid-19 pandemic has hit the economy hard in many areas, and many professionals have lost their jobs. However, developers are still scarce and in high demand. Low-code technology can significantly lower the barriers for people who need or want to reorient themselves professionally.

Florian Weber (Picture: Pegasystems)

A lack of programming skills will no longer be a barrier to entering application development in 2021. This opens up completely new career options. Above all, professionals who can combine low-code development with soft skills such as design thinking can look forward to the best opportunities.

* Florian Weber is Principal Solutions Consultant at Pegasystems.
